Table of Contents

- 14.1 Introduction to InnoDB

- 14.2 Installing the InnoDB Storage Engine

- 14.3 Upgrading the InnoDB Storage Engine

- 14.4 Downgrading the InnoDB Storage Engine

- 14.5 InnoDB and the ACID Model

- 14.6 InnoDB Multi-Versioning

- 14.7 InnoDB Architecture

- 14.8 InnoDB In-Memory Structures

- 14.9 InnoDB On-Disk Structures

- 14.10 InnoDB Locking and Transaction Model

- 14.11 InnoDB Configuration

- 14.11.1 InnoDB Startup Configuration

- 14.11.2 InnoDB Buffer Pool Configuration

- 14.11.3 Configuring the Memory Allocator for InnoDB

- 14.11.4 Configuring Thread Concurrency for InnoDB

- 14.11.5 Configuring the Number of Background InnoDB I/O Threads

- 14.11.6 Using Asynchronous I/O on Linux

- 14.11.7 Configuring InnoDB I/O Capacity

- 14.11.8 Configuring Spin Lock Polling

- 14.11.9 Purge Configuration

- 14.11.10 Configuring Optimizer Statistics for InnoDB

- 14.12 InnoDB Table Compression

- 14.13 InnoDB File-Format Management

- 14.14 InnoDB Row Formats

- 14.15 InnoDB Disk I/O and File Space Management

- 14.16 InnoDB Fast Index Creation

- 14.17 InnoDB Startup Options and System Variables

- 14.18 InnoDB INFORMATION_SCHEMA Tables

- 14.19 InnoDB Integration with MySQL Performance Schema

- 14.20 InnoDB Monitors

- 14.21 InnoDB Backup and Recovery

- 14.22 InnoDB and MySQL Replication

- 14.23 InnoDB Troubleshooting

InnoDB is a general-purpose storage engine that

balances high reliability and high performance. Starting from MySQL

5.5.5, the default storage engine for new tables is

InnoDB rather than

MyISAM. Unless you have configured a

different default storage engine, issuing a

CREATE TABLE statement without an

ENGINE= clause creates an

InnoDB table. Given this change of default

behavior, MySQL 5.5 might be a logical point to evaluate whether

tables that use MyISAM could benefit from

switching to InnoDB.

InnoDB includes all the features that were part

of the InnoDB Plugin for MySQL 5.1, plus new features specific to

MySQL 5.5 and higher.

The mysql and

INFORMATION_SCHEMA databases that implement

some of the MySQL internals still use MyISAM.

In particular, you cannot switch the grant tables to use

InnoDB.

Key Advantages of InnoDB

Its DML operations follow the ACID model, with transactions featuring commit, rollback, and crash-recovery capabilities to protect user data. See Section 14.5, “InnoDB and the ACID Model” for more information.

Row-level locking and Oracle-style consistent reads increase multi-user concurrency and performance. See Section 14.10, “InnoDB Locking and Transaction Model” for more information.

InnoDBtables arrange your data on disk to optimize queries based on primary keys. EachInnoDBtable has a primary key index called the clustered index that organizes the data to minimize I/O for primary key lookups. See Section 14.9.2.1, “Clustered and Secondary Indexes” for more information.To maintain data integrity,

InnoDBsupportsFOREIGN KEYconstraints. With foreign keys, inserts, updates, and deletes are checked to ensure they do not result in inconsistencies across different tables. See Section 14.9.1.5, “InnoDB and FOREIGN KEY Constraints” for more information.

Table 14.1 InnoDB Storage Engine Features

| Feature | Support |

|---|---|

| B-tree indexes | Yes |

| Backup/point-in-time recovery (Implemented in the server, rather than in the storage engine.) | Yes |

| Cluster database support | No |

| Clustered indexes | Yes |

| Compressed data | Yes |

| Data caches | Yes |

| Encrypted data | Yes (Implemented in the server via encryption functions; In MySQL 5.7 and later, data-at-rest tablespace encryption is supported.) |

| Foreign key support | Yes |

| Full-text search indexes | Yes (InnoDB support for FULLTEXT indexes is available in MySQL 5.6 and later.) |

| Geospatial data type support | Yes |

| Geospatial indexing support | Yes (InnoDB support for geospatial indexing is available in MySQL 5.7 and later.) |

| Hash indexes | No (InnoDB utilizes hash indexes internally for its Adaptive Hash Index feature.) |

| Index caches | Yes |

| Locking granularity | Row |

| MVCC | Yes |

| Replication support (Implemented in the server, rather than in the storage engine.) | Yes |

| Storage limits | 64TB |

| T-tree indexes | No |

| Transactions | Yes |

| Update statistics for data dictionary | Yes |

To compare the features of InnoDB with other

storage engines provided with MySQL, see the Storage

Engine Features table in

Chapter 15, Alternative Storage Engines.

InnoDB Enhancements and New Features

The InnoDB storage engine in MySQL

5.5 releases includes a number performance improvements

that in MySQL 5.1 were only available by installing the

InnoDB Plugin. This latest

InnoDB offers new features, improved performance

and scalability, enhanced reliability and new capabilities for

flexibility and ease of use.

For information about InnoDB enhancements and new

features, refer to:

The

InnoDBenhancements list in Section 1.4, “What Is New in MySQL 5.5”.The Release Notes.

Additional InnoDB Information and Resources

For

InnoDB-related terms and definitions, see the MySQL Glossary.For a forum dedicated to the

InnoDBstorage engine, see MySQL Forums::InnoDB.InnoDBis published under the same GNU GPL License Version 2 (of June 1991) as MySQL. For more information on MySQL licensing, see http://www.mysql.com/company/legal/licensing/.

You may find InnoDB tables beneficial for the

following reasons:

If your server crashes because of a hardware or software issue, regardless of what was happening in the database at the time, you don't need to do anything special after restarting the database.

InnoDBcrash recovery automatically finalizes any changes that were committed before the time of the crash, and undoes any changes that were in process but not committed. Just restart and continue where you left off. This process is now much faster than in MySQL 5.1 and earlier.The

InnoDBstorage engine maintains its own buffer pool that caches table and index data in main memory as data is accessed. Frequently used data is processed directly from memory. This cache applies to many types of information and speeds up processing. On dedicated database servers, up to 80% of physical memory is often assigned to the buffer pool.If you split up related data into different tables, you can set up foreign keys that enforce referential integrity. Update or delete data, and the related data in other tables is updated or deleted automatically. Try to insert data into a secondary table without corresponding data in the primary table, and the bad data gets kicked out automatically.

If data becomes corrupted on disk or in memory, a checksum mechanism alerts you to the bogus data before you use it.

When you design your database with appropriate primary key columns for each table, operations involving those columns are automatically optimized. It is very fast to reference the primary key columns in

WHEREclauses,ORDER BYclauses,GROUP BYclauses, and join operations.Inserts, updates, deletes are optimized by an automatic mechanism called change buffering.

InnoDBnot only allows concurrent read and write access to the same table, it caches changed data to streamline disk I/O.Performance benefits are not limited to giant tables with long-running queries. When the same rows are accessed over and over from a table, a feature called the Adaptive Hash Index takes over to make these lookups even faster, as if they came out of a hash table.

You can freely mix

InnoDBtables with tables from other MySQL storage engines, even within the same statement. For example, you can use a join operation to combine data fromInnoDBandMEMORYtables in a single query.InnoDBhas been designed for CPU efficiency and maximum performance when processing large data volumes.InnoDBtables can handle large quantities of data, even on operating systems where file size is limited to 2GB.

For InnoDB-specific tuning techniques you can

apply in your application code, see

Section 8.5, “Optimizing for InnoDB Tables”.

This section describes best practices when using

InnoDB tables.

Specify a primary key for every table using the most frequently queried column or columns, or an auto-increment value if there is no obvious primary key.

Using joins wherever data is pulled from multiple tables based on identical ID values from those tables. For fast join performance, define foreign keys on the join columns, and declare those columns with the same data type in each table. Adding foreign keys ensures that referenced columns are indexed, which can improve performance. Foreign keys also propagate deletes or updates to all affected tables, and prevent insertion of data in a child table if the corresponding IDs are not present in the parent table.

Turning off autocommit. Committing hundreds of times a second puts a cap on performance (limited by the write speed of your storage device).

Grouping sets of related DML operations into transactions, by bracketing them with

START TRANSACTIONandCOMMITstatements. While you don't want to commit too often, you also don't want to issue huge batches ofINSERT,UPDATE, orDELETEstatements that run for hours without committing.Not using

LOCK TABLESstatements.InnoDBcan handle multiple sessions all reading and writing to the same table at once, without sacrificing reliability or high performance. To get exclusive write access to a set of rows, use theSELECT ... FOR UPDATEsyntax to lock just the rows you intend to update.Enabling the

innodb_file_per_tableoption to put the data and indexes for individual tables into separate files, instead of the system tablespace. This setting is required to use some of the other features, such as table compression.Evaluating whether your data and access patterns benefit from the

InnoDBtable compression feature. You can compressInnoDBtables without sacrificing read/write capability.Running your server with the option

--sql_mode=NO_ENGINE_SUBSTITUTIONto prevent tables being created with a different storage engine if there is an issue with the engine specified in theENGINE=clause ofCREATE TABLE.

To determine whether your server supports

InnoDB:

Issue the

SHOW ENGINESstatement to view the available MySQL storage engines. Look forDEFAULTin theInnoDBline.mysql> SHOW ENGINES;

Alternatively, query the

INFORMATION_SCHEMA.ENGINEStable.mysql> SELECT * FROM INFORMATION_SCHEMA.ENGINES;

(Now that

InnoDBis the default MySQL storage engine, only very specialized environments might not support it.)Issue a

SHOW VARIABLESstatement to confirm thatInnoDBis available.mysql> SHOW VARIABLES LIKE 'have_innodb';

If

InnoDBis not present, you have amysqldbinary that was compiled withoutInnoDBsupport and you need to get a different one.If

InnoDBis present but disabled, go back through your startup options and configuration file and get rid of any--skip-innodboption.

The ability to use the InnoDB

table compression feature

introduced in MySQL 5.5 and the new row format

require the use of a new InnoDB file format

called Barracuda. The

previous file format, used by the built-in InnoDB in MySQL 5.1 and earlier, is now called

Antelope and does not support

these features, but does support the other features introduced

with the InnoDB storage engine.

The InnoDB storage engine is upward compatible from standard

InnoDB as built in to, and distributed with,

MySQL. Existing databases can be used with the

InnoDB Storage Engine for MySQL. The new parameter

innodb_file_format can help

protect upward and downward compatibility between

InnoDB versions and database files, allowing

users to enable or disable use of new features that can only be

used with certain versions of InnoDB.

InnoDB since version 5.0.21 has a safety

feature that prevents it from opening tables that are in an

unknown format. However, the system tablespace may contain

references to new-format tables that confuse the built-in InnoDB in MySQL 5.1 and earlier. These

references are cleared in a

slow shutdown.

With previous versions of InnoDB, no error

would be returned until you try to access a table that is in a

format “too new” for the software. To provide early

feedback, InnoDB now checks the system

tablespace before startup to ensure that the file format used in

the database is supported by the storage engine. See

Section 14.13.2.1, “Compatibility Check When InnoDB Is Started” for

the details.

If InnoDB is not your default storage engine,

you can determine if your database server or applications work

correctly with InnoDB by restarting the server

with

--default-storage-engine=InnoDB

defined on the command line or with

default-storage-engine=innodb

defined in the [mysqld] section of your MySQL

server option file.

Since changing the default storage engine only affects new tables

as they are created, run all your application installation and

setup steps to confirm that everything installs properly. Then

exercise all the application features to make sure all the data

loading, editing, and querying features work. If a table relies on

a feature that is specific to another storage engine, you will

receive an error; add the

ENGINE=

clause to the other_engine_nameCREATE TABLE statement to avoid

the error.

If you did not make a deliberate decision about the storage

engine, and you want to preview how certain tables work when

created using InnoDB, issue the command

ALTER TABLE table_name ENGINE=InnoDB; for each

table. Or, to run test queries and other statements without

disturbing the original table, make a copy:

CREATE TABLE InnoDB_Table (...) ENGINE=InnoDB AS SELECT * FROM other_engine_table;

To assess performance with a full application under a realistic workload, install the latest MySQL server and run benchmarks.

Test the full application lifecycle, from installation, through heavy usage, and server restart. Kill the server process while the database is busy to simulate a power failure, and verify that the data is recovered successfully when you restart the server.

Test any replication configurations, especially if you use different MySQL versions and options on the master and slaves.

Oracle recommends InnoDB as the preferred

storage engine for typical database applications, from single-user

wikis and blogs running on a local system, to high-end

applications pushing the limits of performance. As of MySQL

5.5, InnoDB is the default storage

engine for new tables.

If you do not want to use InnoDB tables:

Start the server with the

--innodb=OFFor--skip-innodboption to disable theInnoDBstorage engine.Because the default storage engine is

InnoDB, the server will not start unless you also change thedefault_storage_enginesystem variable to set the default to some other available engine.To prevent the server from crashing when the

InnoDB-relatedinformation_schematables are queried, also disable the plugins associated with those tables. Specify in the[mysqld]section of the MySQL configuration file:loose-innodb-trx=0 loose-innodb-locks=0 loose-innodb-lock-waits=0 loose-innodb-cmp=0 loose-innodb-cmp-per-index=0 loose-innodb-cmp-per-index-reset=0 loose-innodb-cmp-reset=0 loose-innodb-cmpmem=0 loose-innodb-cmpmem-reset=0 loose-innodb-buffer-page=0 loose-innodb-buffer-page-lru=0 loose-innodb-buffer-pool-stats=0

Oracle acknowledges that certain Third Party and Open Source software has been used to develop or is incorporated in the InnoDB storage engine. This appendix includes required third-party license information.

Oracle gratefully acknowledges the following contributions from Google, Inc. to improve InnoDB performance:

Replacing InnoDB's use of Pthreads mutexes with calls to GCC atomic builtins. This change means that InnoDB mutex and rw-lock operations take less CPU time, and improves throughput on those platforms where the atomic operations are available.

Controlling InnoDB

I/Ocapacity, as discussed in Section 14.11.7, “Configuring InnoDB I/O Capacity”. InnoDB performs various tasks in the background. Historically, InnoDB has used a hard coded value as the totalI/Ocapacity of the server. With this change, user can control the number ofI/Ooperations that can be performed per second based on their own workload.

Changes from the Google contributions were incorporated in the

following source code files: btr0cur.c,

btr0sea.c, buf0buf.c,

buf0buf.ic, ha_innodb.cc,

log0log.c, log0log.h,

os0sync.h, row0sel.c,

srv0srv.c, srv0srv.h,

srv0start.c, sync0arr.c,

sync0rw.c, sync0rw.h,

sync0rw.ic, sync0sync.c,

sync0sync.h, sync0sync.ic,

and univ.i.

These contributions are incorporated subject to the conditions

contained in the file COPYING.Google, which are

reproduced here.

Copyright (c) 2008, 2009, Google Inc.

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions

are met:

* Redistributions of source code must retain the above copyright

notice, this list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above

copyright notice, this list of conditions and the following

disclaimer in the documentation and/or other materials

provided with the distribution.

* Neither the name of the Google Inc. nor the names of its

contributors may be used to endorse or promote products

derived from this software without specific prior written

permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS

FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE

COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT,

INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING,

BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;

LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT

LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN

ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE

POSSIBILITY OF SUCH DAMAGE.

Oracle gratefully acknowledges the contribution of Percona, Inc. to improve InnoDB performance by implementing configurable background threads, as discussed in Section 14.11.5, “Configuring the Number of Background InnoDB I/O Threads”. InnoDB uses background threads to service various types of I/O requests. The change provides another way to make InnoDB more scalable on high end systems.

Changes from the Percona, Inc. contribution were incorporated in

the following source code files: ha_innodb.cc,

os0file.c, os0file.h,

srv0srv.c, srv0srv.h, and

srv0start.c.

This contribution is incorporated subject to the conditions

contained in the file COPYING.Percona, which

are reproduced here.

Copyright (c) 2008, 2009, Percona Inc.

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions

are met:

* Redistributions of source code must retain the above copyright

notice, this list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above

copyright notice, this list of conditions and the following

disclaimer in the documentation and/or other materials

provided with the distribution.

* Neither the name of the Percona Inc. nor the names of its

contributors may be used to endorse or promote products

derived from this software without specific prior written

permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS

FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE

COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT,

INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING,

BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;

LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT

LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN

ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE

POSSIBILITY OF SUCH DAMAGE.

Oracle gratefully acknowledges the following contributions from Sun Microsystems, Inc. to improve InnoDB performance:

Introducing the

PAUSEinstruction inside spin loops. This change increases performance in high concurrency, CPU-bound workloads.Enabling inlining of functions and prefetch with Sun Studio.

Changes from the Sun Microsystems, Inc. contribution were

incorporated in the following source code files:

univ.i, ut0ut.c, and

ut0ut.h.

This contribution is incorporated subject to the conditions

contained in the file COPYING.Sun_Microsystems,

which are reproduced here.

Copyright (c) 2009, Sun Microsystems, Inc.

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions

are met:

* Redistributions of source code must retain the above copyright

notice, this list of conditions and the following disclaimer.

* Redistributions in binary form must reproduce the above

copyright notice, this list of conditions and the following

disclaimer in the documentation and/or other materials

provided with the distribution.

* Neither the name of Sun Microsystems, Inc. nor the names of its

contributors may be used to endorse or promote products

derived from this software without specific prior written

permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS

FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE

COPYRIGHT OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT,

INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING,

BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;

LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT

LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN

ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE

POSSIBILITY OF SUCH DAMAGE.

When you use the InnoDB storage engine 1.1 and above, with MySQL 5.5 and above, you do not need to do anything special to install: everything comes configured as part of the MySQL source and binary distributions. This is a change from earlier releases of the InnoDB Plugin, where you were required to match up MySQL and InnoDB version numbers and update your build and configuration processes.

The InnoDB storage engine is included in the MySQL distribution, starting from MySQL 5.1.38. From MySQL 5.1.46 and up, this is the only download location for the InnoDB storage engine; it is not available from the InnoDB website.

If you used any scripts or configuration files with the earlier

InnoDB storage engine from the InnoDB website, be aware that the filename

of the shared library as supplied by MySQL is

ha_innodb_plugin.so or

ha_innodb_plugin.dll, as opposed to

ha_innodb.so or ha_innodb.dll

in the older Plugin downloaded from the InnoDB website. You might

need to change the applicable file names in your startup or

configuration scripts.

Because the InnoDB storage engine has now replaced the built-in InnoDB,

you no longer need to specify options like

--ignore-builtin-innodb and

--plugin-load during startup.

To take best advantage of current InnoDB features, we recommend specifying the following options in your configuration file:

innodb_file_per_table=1 innodb_file_format=barracuda innodb_strict_mode=1

For information about these features, see

Section 14.17, “InnoDB Startup Options and System Variables”,

Section 14.13, “InnoDB File-Format Management”, and

innodb_strict_mode. You might need

to continue to use the previous values for these parameters in some

replication and similar configurations involving both new and older

versions of MySQL.

Prior to MySQL 5.5, some upgrade scenarios involved upgrading the separate instance of InnoDB known as the InnoDB Plugin. In MySQL 5.5 and higher, the features of the InnoDB Plugin have been folded back into built-in InnoDB, so the upgrade procedure for InnoDB is the same as the one for the MySQL server. For details, see Section 2.11, “Upgrading MySQL”.

Prior to MySQL 5.5, some downgrade scenarios involved switching the separate instance of InnoDB known as the InnoDB Plugin back to the built-in InnoDB storage engine. In MySQL 5.5 and higher, the features of the InnoDB Plugin have been folded back into built-in InnoDB, so the downgrade procedure for InnoDB is the same as the one for the MySQL server. For details, see Section 2.12, “Downgrading MySQL”.

The ACID model is a set of database

design principles that emphasize aspects of reliability that are

important for business data and mission-critical applications. MySQL

includes components such as the InnoDB storage

engine that adhere closely to the ACID model, so that data is not

corrupted and results are not distorted by exceptional conditions

such as software crashes and hardware malfunctions. When you rely on

ACID-compliant features, you do not need to reinvent the wheel of

consistency checking and crash recovery mechanisms. In cases where

you have additional software safeguards, ultra-reliable hardware, or

an application that can tolerate a small amount of data loss or

inconsistency, you can adjust MySQL settings to trade some of the

ACID reliability for greater performance or throughput.

The following sections discuss how MySQL features, in particular the

InnoDB storage engine, interact with the

categories of the ACID model:

A: atomicity.

C: consistency.

I:: isolation.

D: durability.

Atomicity

The atomicity aspect of the ACID

model mainly involves InnoDB

transactions. Related MySQL

features include:

Consistency

The consistency aspect of the ACID

model mainly involves internal InnoDB processing

to protect data from crashes. Related MySQL features include:

InnoDBdoublewrite buffer.InnoDBcrash recovery.

Isolation

The isolation aspect of the ACID

model mainly involves InnoDB

transactions, in particular

the isolation level that

applies to each transaction. Related MySQL features include:

Autocommit setting.

SET ISOLATION LEVELstatement.The low-level details of

InnoDBlocking. During performance tuning, you see these details throughINFORMATION_SCHEMAtables.

Durability

The durability aspect of the ACID model involves MySQL software features interacting with your particular hardware configuration. Because of the many possibilities depending on the capabilities of your CPU, network, and storage devices, this aspect is the most complicated to provide concrete guidelines for. (And those guidelines might take the form of buy “new hardware”.) Related MySQL features include:

InnoDBdoublewrite buffer, turned on and off by theinnodb_doublewriteconfiguration option.Configuration option

innodb_flush_log_at_trx_commit.Configuration option

sync_binlog.Configuration option

innodb_file_per_table.Write buffer in a storage device, such as a disk drive, SSD, or RAID array.

Battery-backed cache in a storage device.

The operating system used to run MySQL, in particular its support for the

fsync()system call.Uninterruptible power supply (UPS) protecting the electrical power to all computer servers and storage devices that run MySQL servers and store MySQL data.

Your backup strategy, such as frequency and types of backups, and backup retention periods.

For distributed or hosted data applications, the particular characteristics of the data centers where the hardware for the MySQL servers is located, and network connections between the data centers.

InnoDB is a

multi-versioned storage engine: it

keeps information about old versions of changed rows, to support

transactional features such as concurrency and

rollback. This information is

stored in the tablespace in a data structure called a

rollback segment (after

an analogous data structure in Oracle). InnoDB

uses the information in the rollback segment to perform the undo

operations needed in a transaction rollback. It also uses the

information to build earlier versions of a row for a

consistent read.

Internally, InnoDB adds three fields to each row

stored in the database. A 6-byte DB_TRX_ID field

indicates the transaction identifier for the last transaction that

inserted or updated the row. Also, a deletion is treated internally

as an update where a special bit in the row is set to mark it as

deleted. Each row also contains a 7-byte

DB_ROLL_PTR field called the roll pointer. The

roll pointer points to an undo log record written to the rollback

segment. If the row was updated, the undo log record contains the

information necessary to rebuild the content of the row before it

was updated. A 6-byte DB_ROW_ID field contains a

row ID that increases monotonically as new rows are inserted. If

InnoDB generates a clustered index automatically,

the index contains row ID values. Otherwise, the

DB_ROW_ID column does not appear in any index.

Undo logs in the rollback segment are divided into insert and update

undo logs. Insert undo logs are needed only in transaction rollback

and can be discarded as soon as the transaction commits. Update undo

logs are used also in consistent reads, but they can be discarded

only after there is no transaction present for which

InnoDB has assigned a snapshot that in a

consistent read could need the information in the update undo log to

build an earlier version of a database row.

Commit your transactions regularly, including those transactions

that issue only consistent reads. Otherwise,

InnoDB cannot discard data from the update undo

logs, and the rollback segment may grow too big, filling up your

tablespace.

The physical size of an undo log record in the rollback segment is typically smaller than the corresponding inserted or updated row. You can use this information to calculate the space needed for your rollback segment.

In the InnoDB multi-versioning scheme, a row is

not physically removed from the database immediately when you delete

it with an SQL statement. InnoDB only physically

removes the corresponding row and its index records when it discards

the update undo log record written for the deletion. This removal

operation is called a purge, and

it is quite fast, usually taking the same order of time as the SQL

statement that did the deletion.

If you insert and delete rows in smallish batches at about the same

rate in the table, the purge thread can start to lag behind and the

table can grow bigger and bigger because of all the

“dead” rows, making everything disk-bound and very

slow. In such a case, throttle new row operations, and allocate more

resources to the purge thread by tuning the

innodb_max_purge_lag system

variable. See Section 14.17, “InnoDB Startup Options and System Variables” for more

information.

InnoDB multiversion concurrency control (MVCC)

treats secondary indexes differently than clustered indexes.

Records in a clustered index are updated in-place, and their

hidden system columns point undo log entries from which earlier

versions of records can be reconstructed. Unlike clustered index

records, secondary index records do not contain hidden system

columns nor are they updated in-place.

When a secondary index column is updated, old secondary index

records are delete-marked, new records are inserted, and

delete-marked records are eventually purged. When a secondary

index record is delete-marked or the secondary index page is

updated by a newer transaction, InnoDB looks up

the database record in the clustered index. In the clustered

index, the record's DB_TRX_ID is checked, and

the correct version of the record is retrieved from the undo log

if the record was modified after the reading transaction was

initiated.

If a secondary index record is marked for deletion or the

secondary index page is updated by a newer transaction, the

covering index

technique is not used. Instead of returning values from the index

structure, InnoDB looks up the record in the

clustered index.

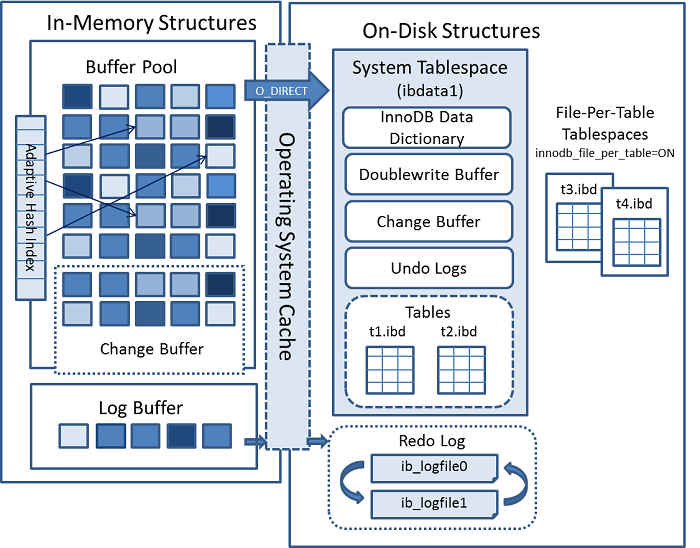

The following diagram shows in-memory and on-disk structures that

comprise the InnoDB storage engine

architecture. For information about each structure, see

Section 14.8, “InnoDB In-Memory Structures”, and

Section 14.9, “InnoDB On-Disk Structures”.

This section describes InnoDB in-memory

structures and related topics.

The buffer pool is an area in main memory where

InnoDB caches table and index data as it is

accessed. The buffer pool permits frequently used data to be

processed directly from memory, which speeds up processing. On

dedicated servers, up to 80% of physical memory is often assigned to

the buffer pool.

For efficiency of high-volume read operations, the buffer pool is divided into pages that can potentially hold multiple rows. For efficiency of cache management, the buffer pool is implemented as a linked list of pages; data that is rarely used is aged out of the cache using a variation of the LRU algorithm.

Knowing how to take advantage of the buffer pool to keep frequently accessed data in memory is an important aspect of MySQL tuning.

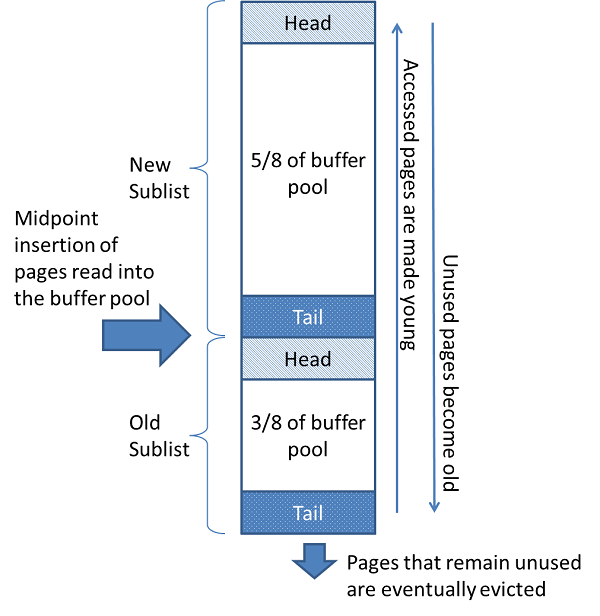

The buffer pool is managed as a list using a variation of the least recently used (LRU) algorithm. When room is needed to add a new page to the buffer pool, the least recently used page is evicted and a new page is added to the middle of the list. This midpoint insertion strategy treats the list as two sublists:

At the head, a sublist of new (“young”) pages that were accessed recently

At the tail, a sublist of old pages that were accessed less recently

The algorithm keeps heavily pages in the new sublist. The old sublist contains less-used pages; these pages are candidates for eviction.

By default, the algorithm operates as follows:

3/8 of the buffer pool is devoted to the old sublist.

The midpoint of the list is the boundary where the tail of the new sublist meets the head of the old sublist.

When

InnoDBreads a page into the buffer pool, it initially inserts it at the midpoint (the head of the old sublist). A page can be read because it is required for a user-initiated operation such as an SQL query, or as part of a read-ahead operation performed automatically byInnoDB.Accessing a page in the old sublist makes it “young”, moving it to the head of the new sublist. If the page was read because it was required by a user-initiated operation, the first access occurs immediately and the page is made young. If the page was read due to a read-ahead operation, the first access does not occur immediately, and might not occur at all before the page is evicted.

As the database operates, pages in the buffer pool that are not accessed “age” by moving toward the tail of the list. Pages in both the new and old sublists age as other pages are made new. Pages in the old sublist also age as pages are inserted at the midpoint. Eventually, a page that remains unused reaches the tail of the old sublist and is evicted.

By default, pages read by queries are immediately moved into the

new sublist, meaning they stay in the buffer pool longer. A table

scan, performed for a mysqldump operation or a

SELECT statement with no

WHERE clause, for example, can bring a large

amount of data into the buffer pool and evict an equivalent amount

of older data, even if the new data is never used again.

Similarly, pages that are loaded by the read-ahead background

thread and accessed only once are moved to the head of the new

list. These situations can push frequently used pages to the old

sublist where they become subject to eviction. For information

about optimizing this behavior, see

Section 14.11.2.2, “Making the Buffer Pool Scan Resistant”, and

Section 14.11.2.3, “Configuring InnoDB Buffer Pool Prefetching (Read-Ahead)”.

InnoDB Standard Monitor output contains several

fields in the BUFFER POOL AND MEMORY section

regarding operation of the buffer pool LRU algorithm. For details,

see Monitoring the Buffer Pool Using the InnoDB Standard Monitor.

You can configure the various aspects of the buffer pool to improve performance.

Ideally, you set the size of the buffer pool to as large a value as practical, leaving enough memory for other processes on the server to run without excessive paging. The larger the buffer pool, the more

InnoDBacts like an in-memory database, reading data from disk once and then accessing the data from memory during subsequent reads. Buffer pool size is configured using theinnodb_buffer_pool_sizeconfiguration option.On 64-bit systems with sufficient memory, you can split the buffer pool into multiple parts to minimize contention for memory structures among concurrent operations. For details, see Section 14.11.2.1, “Configuring Multiple Buffer Pool Instances”.

You can keep frequently accessed data in memory regardless of sudden spikes of activity from operations that would bring large amounts of infrequently accessed data into the buffer pool. For details, see Section 14.11.2.2, “Making the Buffer Pool Scan Resistant”.

You can control when and how to perform read-ahead requests to prefetch pages into the buffer pool asynchronously in anticipation that the pages will be needed soon. For details, see Section 14.11.2.3, “Configuring InnoDB Buffer Pool Prefetching (Read-Ahead)”.

You can control when background flushing occurs and whether or not the rate of flushing is dynamically adjusted based on workload. For details, see Section 14.11.2.4, “Configuring Buffer Pool Flushing”.

InnoDB Standard Monitor output, which can be

accessed using

SHOW

ENGINE INNODB STATUS, provides metrics regarding

operation of the buffer pool. Buffer pool metrics are located in

the BUFFER POOL AND MEMORY section of

InnoDB Standard Monitor output and appear

similar to the following:

---------------------- BUFFER POOL AND MEMORY ---------------------- Total memory allocated 2217738240; in additional pool allocated 0 Dictionary memory allocated 121719 Buffer pool size 131072 Free buffers 129937 Database pages 1134 Old database pages 211 Modified db pages 187 Pending reads 0 Pending writes: LRU 0, flush list 0, single page 0 Pages made young 0, not young 0 0.00 youngs/s, 0.00 non-youngs/s Pages read 426, created 708, written 768 0.00 reads/s, 40.99 creates/s, 50.49 writes/s Buffer pool hit rate 1000 / 1000, young-making rate 0 / 1000 not 0 / 1000 Pages read ahead 0.00/s, evicted without access 0.00/s, Random read ahead 0.00/s LRU len: 1134, unzip_LRU len: 0 I/O sum[0]:cur[0], unzip sum[0]:cur[0]

The following table describes buffer pool metrics reported by the

InnoDB Standard Monitor.

Per second averages provided in InnoDB

Standard Monitor output are based on the elapsed time since

InnoDB Standard Monitor output was last

printed.

Table 14.2 InnoDB Buffer Pool Metrics

| Name | Description |

|---|---|

| Total memory allocated | The total memory allocated for the buffer pool in bytes. |

| additional pool allocated | The total memory allocated for the additional pool in bytes. |

| Dictionary memory allocated | The total memory allocated for the InnoDB data

dictionary in bytes. |

| Buffer pool size | The total size in pages allocated to the buffer pool. |

| Free buffers | The total size in pages of the buffer pool free list. |

| Database pages | The total size in pages of the buffer pool LRU list. |

| Old database pages | The total size in pages of the buffer pool old LRU sublist. |

| Modified db pages | The current number of pages modified in the buffer pool. |

| Pending reads | The number of buffer pool pages waiting to be read into the buffer pool. |

| Pending writes LRU | The number of old dirty pages within the buffer pool to be written from the bottom of the LRU list. |

| Pending writes flush list | The number of buffer pool pages to be flushed during checkpointing. |

| Pending writes single page | The number of pending independent page writes within the buffer pool. |

| Pages made young | The total number of pages made young in the buffer pool LRU list (moved to the head of sublist of “new” pages). |

| Pages made not young | The total number of pages not made young in the buffer pool LRU list (pages that have remained in the “old” sublist without being made young). |

| youngs/s | The per second average of accesses to old pages in the buffer pool LRU list that have resulted in making pages young. See the notes that follow this table for more information. |

| non-youngs/s | The per second average of accesses to old pages in the buffer pool LRU list that have resulted in not making pages young. See the notes that follow this table for more information. |

| Pages read | The total number of pages read from the buffer pool. |

| Pages created | The total number of pages created within the buffer pool. |

| Pages written | The total number of pages written from the buffer pool. |

| reads/s | The per second average number of buffer pool page reads per second. |

| creates/s | The per second average number of buffer pool pages created per second. |

| writes/s | The per second average number of buffer pool page writes per second. |

| Buffer pool hit rate | The buffer pool page hit rate for pages read from the buffer pool memory vs from disk storage. |

| young-making rate | The average hit rate at which page accesses have resulted in making pages young. See the notes that follow this table for more information. |

| not (young-making rate) | The average hit rate at which page accesses have not resulted in making pages young. See the notes that follow this table for more information. |

| Pages read ahead | The per second average of read ahead operations. |

| Pages evicted without access | The per second average of the pages evicted without being accessed from the buffer pool. |

| Random read ahead | The per second average of random read ahead operations. |

| LRU len | The total size in pages of the buffer pool LRU list. |

| unzip_LRU len | The total size in pages of the buffer pool unzip_LRU list. |

| I/O sum | The total number of buffer pool LRU list pages accessed, for the last 50 seconds. |

| I/O cur | The total number of buffer pool LRU list pages accessed. |

| I/O unzip sum | The total number of buffer pool unzip_LRU list pages accessed. |

| I/O unzip cur | The total number of buffer pool unzip_LRU list pages accessed. |

Notes:

The

youngs/smetric is applicable only to old pages. It is based on the number of accesses to pages and not the number of pages. There can be multiple accesses to a given page, all of which are counted. If you see very lowyoungs/svalues when there are no large scans occurring, you might need to reduce the delay time or increase the percentage of the buffer pool used for the old sublist. Increasing the percentage makes the old sublist larger, so pages in that sublist take longer to move to the tail, which increases the likelihood that those pages will be accessed again and made young.The

non-youngs/smetric is applicable only to old pages. It is based on the number of accesses to pages and not the number of pages. There can be multiple accesses to a given page, all of which are counted. If you do not see a highernon-youngs/svalue when performing large table scans (and a higheryoungs/svalue), increase the delay value.The

young-makingrate accounts for accesses to all buffer pool pages, not just accesses to pages in the old sublist. Theyoung-makingrate andnotrate do not normally add up to the overall buffer pool hit rate. Page hits in the old sublist cause pages to move to the new sublist, but page hits in the new sublist cause pages to move to the head of the list only if they are a certain distance from the head.not (young-making rate)is the average hit rate at which page accesses have not resulted in making pages young due to the delay defined byinnodb_old_blocks_timenot being met, or due to page hits in the new sublist that did not result in pages being moved to the head. This rate accounts for accesses to all buffer pool pages, not just accesses to pages in the old sublist.

Buffer pool server status

variables and the

INNODB_BUFFER_POOL_STATS table

provide many of the same buffer pool metrics found in

InnoDB Standard Monitor output. For more

information, see

Example 14.6, “Querying the INNODB_BUFFER_POOL_STATS Table”.

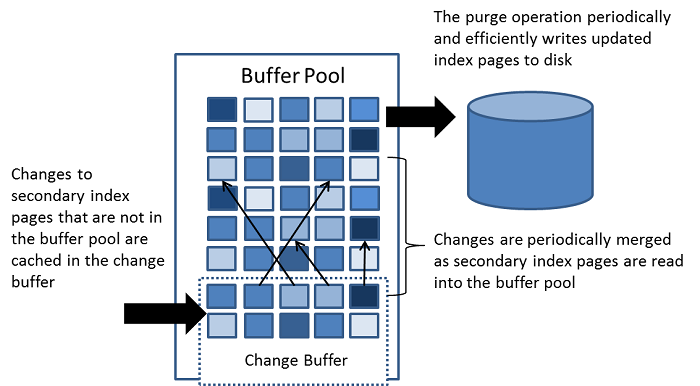

The change buffer is a special data structure that caches changes to

secondary index pages

when those pages are not in the

buffer pool. The buffered

changes, which may result from

INSERT,

UPDATE, or

DELETE operations (DML), are merged

later when the pages are loaded into the buffer pool by other read

operations.

Unlike clustered indexes, secondary indexes are usually nonunique, and inserts into secondary indexes happen in a relatively random order. Similarly, deletes and updates may affect secondary index pages that are not adjacently located in an index tree. Merging cached changes at a later time, when affected pages are read into the buffer pool by other operations, avoids substantial random access I/O that would be required to read secondary index pages into the buffer pool from disk.

Periodically, the purge operation that runs when the system is mostly idle, or during a slow shutdown, writes the updated index pages to disk. The purge operation can write disk blocks for a series of index values more efficiently than if each value were written to disk immediately.

Change buffer merging may take several hours when there are many affected rows and numerous secondary indexes to update. During this time, disk I/O is increased, which can cause a significant slowdown for disk-bound queries. Change buffer merging may also continue to occur after a transaction is committed, and even after a server shutdown and restart (see Section 14.23.2, “Forcing InnoDB Recovery” for more information).

In memory, the change buffer occupies part of the buffer pool. On disk, the change buffer is part of the system tablespace, where index changes are buffered when the database server is shut down.

The type of data cached in the change buffer is governed by the

innodb_change_buffering variable.

For more information, see

Configuring Change Buffering.

For answers to frequently asked questions about the change buffer, see Section A.15, “MySQL 5.5 FAQ: InnoDB Change Buffer”.

When INSERT,

UPDATE, and

DELETE operations are performed on

a table, the values of indexed columns (particularly the values of

secondary keys) are often in an unsorted order, requiring

substantial I/O to bring secondary indexes up to date. The

change buffer caches

changes to secondary index entries when the relevant

page is not in the

buffer pool, thus avoiding

expensive I/O operations by not immediately reading in the page

from disk. The buffered changes are merged when the page is loaded

into the buffer pool, and the updated page is later flushed to

disk. The InnoDB main thread merges buffered

changes when the server is nearly idle, and during a

slow shutdown.

Because it can result in fewer disk reads and writes, the change buffer feature is most valuable for workloads that are I/O-bound, for example applications with a high volume of DML operations such as bulk inserts.

However, the change buffer occupies a part of the buffer pool, reducing the memory available to cache data pages. If the working set almost fits in the buffer pool, or if your tables have relatively few secondary indexes, it may be useful to disable change buffering. If the working data set fits entirely within the buffer pool, change buffering does not impose extra overhead, because it only applies to pages that are not in the buffer pool.

You can control the extent to which InnoDB

performs change buffering using the

innodb_change_buffering

configuration parameter. You can enable or disable buffering for

inserts, delete operations (when index records are initially

marked for deletion) and purge operations (when index records are

physically deleted). An update operation is a combination of an

insert and a delete. The default

innodb_change_buffering value is

all.

Permitted innodb_change_buffering

values include:

allThe default value: buffer inserts, delete-marking operations, and purges.

noneDo not buffer any operations.

insertsBuffer insert operations.

deletesBuffer delete-marking operations.

changesBuffer both inserts and delete-marking operations.

purgesBuffer physical deletion operations that happen in the background.

You can set the

innodb_change_buffering variable

in the MySQL option file (my.cnf or

my.ini) or change it dynamically with the

SET GLOBAL

statement, which requires privileges sufficient to set global

system variables. See

Section 5.1.8.1, “System Variable Privileges”. Changing the setting

affects the buffering of new operations; the merging of existing

buffered entries is not affected.

The following options are available for change buffer monitoring:

InnoDBStandard Monitor output includes status information for the change buffer. To view monitor data, issue theSHOW ENGINE INNODB STATUScommand.mysql>

SHOW ENGINE INNODB STATUS\GChange buffer status information is located under the

INSERT BUFFER AND ADAPTIVE HASH INDEXheading and appears similar to the following:------------------------------------- INSERT BUFFER AND ADAPTIVE HASH INDEX ------------------------------------- Ibuf: size 1, free list len 0, seg size 2, 0 merges merged operations: insert 0, delete mark 0, delete 0 discarded operations: insert 0, delete mark 0, delete 0 Hash table size 276707, node heap has 1 buffer(s) 15.81 hash searches/s, 46.33 non-hash searches/s

For more information, see Section 14.20.3, “InnoDB Standard Monitor and Lock Monitor Output”.

The

INFORMATION_SCHEMA.INNODB_BUFFER_PAGEtable provides metadata about each page in the buffer pool, including change buffer index and change buffer bitmap pages. Change buffer pages are identified byPAGE_TYPE.IBUF_INDEXis the page type for change buffer index pages, andIBUF_BITMAPis the page type for change buffer bitmap pages.WarningQuerying the

INNODB_BUFFER_PAGEtable can introduce significant performance overhead. To avoid impacting performance, reproduce the issue you want to investigate on a test instance and run your queries on the test instance.For example, you can query the

INNODB_BUFFER_PAGEtable to determine the approximate number ofIBUF_INDEXandIBUF_BITMAPpages as a percentage of total buffer pool pages.mysql>

SELECT (SELECT COUNT(*) FROM INFORMATION_SCHEMA.INNODB_BUFFER_PAGEWHERE PAGE_TYPE LIKE 'IBUF%') AS change_buffer_pages,(SELECT COUNT(*) FROM INFORMATION_SCHEMA.INNODB_BUFFER_PAGE) AS total_pages,(SELECT ((change_buffer_pages/total_pages)*100))AS change_buffer_page_percentage;+---------------------+-------------+-------------------------------+ | change_buffer_pages | total_pages | change_buffer_page_percentage | +---------------------+-------------+-------------------------------+ | 25 | 8192 | 0.3052 | +---------------------+-------------+-------------------------------+For information about other data provided by the

INNODB_BUFFER_PAGEtable, see Section 21.29.1, “The INFORMATION_SCHEMA INNODB_BUFFER_PAGE Table”. For related usage information, see Section 14.18.3, “InnoDB INFORMATION_SCHEMA Buffer Pool Tables”.Performance Schema provides change buffer mutex wait instrumentation for advanced performance monitoring. To view change buffer instrumentation, issue the following query (Performance Schema must be enabled):

mysql>

SELECT * FROM performance_schema.setup_instrumentsWHERE NAME LIKE '%wait/synch/mutex/innodb/ibuf%';+-------------------------------------------------------+---------+-------+ | NAME | ENABLED | TIMED | +-------------------------------------------------------+---------+-------+ | wait/synch/mutex/innodb/ibuf_bitmap_mutex | YES | YES | | wait/synch/mutex/innodb/ibuf_mutex | YES | YES | | wait/synch/mutex/innodb/ibuf_pessimistic_insert_mutex | YES | YES | +-------------------------------------------------------+---------+-------+For information about monitoring

InnoDBmutex waits, see Section 14.19.1, “Monitoring InnoDB Mutex Waits Using Performance Schema”.

The adaptive hash index feature enables InnoDB

to perform more like an in-memory database on systems with

appropriate combinations of workload and sufficient memory for the

buffer pool without sacrificing transactional features or

reliability. The adaptive hash index feature is enabled by the

innodb_adaptive_hash_index

variable, or turned off at server startup by

--skip-innodb-adaptive-hash-index.

Based on the observed pattern of searches, a hash index is built using a prefix of the index key. The prefix can be any length, and it may be that only some values in the B-tree appear in the hash index. Hash indexes are built on demand for the pages of the index that are accessed often.

If a table fits almost entirely in main memory, a hash index can

speed up queries by enabling direct lookup of any element, turning

the index value into a sort of pointer. InnoDB

has a mechanism that monitors index searches. If

InnoDB notices that queries could benefit from

building a hash index, it does so automatically.

With some workloads, the speedup from hash index lookups greatly

outweighs the extra work to monitor index lookups and maintain the

hash index structure. Access to the adaptive hash index can

sometimes become a source of contention under heavy workloads,

such as multiple concurrent joins. Queries with

LIKE operators and %

wildcards also tend not to benefit. For workloads that do not

benefit from the adaptive hash index feature, turning it off

reduces unnecessary performance overhead. Because it is difficult

to predict in advance whether the adaptive hash index feature is

appropriate for a particular system and workload, consider running

benchmarks with it enabled and disabled.

You can monitor adaptive hash index use and contention in the

SEMAPHORES section of

SHOW ENGINE INNODB

STATUS output. If there are numerous threads waiting on

an RW-latch created in btr0sea.c, it might be

useful to disable the adaptive hash index feature.

For information about the performance characteristics of hash indexes, see Section 8.3.8, “Comparison of B-Tree and Hash Indexes”.

The log buffer is the memory area that holds data to be written to

the log files on disk. Log buffer size is defined by the

innodb_log_buffer_size variable.

The default size is 16MB. The contents of the log buffer are

periodically flushed to disk. A large log buffer enables large

transactions to run without the need to write redo log data to

disk before the transactions commit. Thus, if you have

transactions that update, insert, or delete many rows, increasing

the size of the log buffer saves disk I/O.

The

innodb_flush_log_at_trx_commit

variable controls how the contents of the log buffer are written

and flushed to disk. The

innodb_flush_log_at_timeout

variable controls log flushing frequency.

For related information, see Memory Configuration, and Section 8.5.3, “Optimizing InnoDB Redo Logging”.

This section describes InnoDB on-disk structures

and related topics.

This section covers topics related to InnoDB

tables.

To create an InnoDB table, use the

CREATE TABLE statement.

CREATE TABLE t1 (a INT, b CHAR (20), PRIMARY KEY (a)) ENGINE=InnoDB;

You do not need to specify the ENGINE=InnoDB

clause if InnoDB is defined as the default

storage engine, which it is by default. To check the default

storage engine, issue the following statement:

mysql> SELECT @@default_storage_engine;

+--------------------------+

| @@default_storage_engine |

+--------------------------+

| InnoDB |

+--------------------------+

You might still use ENGINE=InnoDB clause if you

plan to use mysqldump or replication to replay

the CREATE TABLE statement on a

server where the default storage engine is not

InnoDB.

An InnoDB table and its indexes can be created

in the system

tablespace or in a

file-per-table

tablespace. When

innodb_file_per_table is enabled,

an InnoDB table is implicitly created in an

individual file-per-table tablespace. Conversely, when

innodb_file_per_table is

disabled, an InnoDB table is implicitly created

in the InnoDB system tablespace.

When you create an InnoDB table, MySQL creates

a .frm file in the database

directory under the MySQL data directory. For more information

about .frm files, see

InnoDB Tables and .frm Files. For a table created in a

file-per-table tablespace, MySQL also creates an

.ibd tablespace file in the

database directory. A table created in the

InnoDBsystem tablespace is created in an

existing ibdata file, which

resides in the MySQL data directory.

Internally, InnoDB adds an entry for each table

to the InnoDB data dictionary. The entry

includes the database name. For example, if table

t1 is created in the test

database, the data dictionary entry for the database name is

'test/t1'. This means you can create a table of

the same name (t1) in a different database, and

the table names do not collide inside InnoDB.

MySQL stores data dictionary information for tables in

.frm files in database

directories. Unlike other MySQL storage engines,

InnoDB also encodes information about the

table in its own internal data dictionary inside the system

tablespace. When MySQL drops a table or a database, it deletes

one or more .frm files as well as the

corresponding entries inside the InnoDB data

dictionary. You cannot move InnoDB tables

between databases simply by moving the .frm

files. For information about moving InnoDB

tables, see Section 14.9.1.2, “Moving or Copying InnoDB Tables”.

The default row format of

an InnoDB table is

Compact. Although this row format is fine for

basic experimentation, consider using the

Dynamic

or

Compressed

row format to take advantage of InnoDB

features such as table compression and efficient off-page

storage of long column values. Using these row formats requires

that innodb_file_per_table is

enabled and that

innodb_file_format is set to

Barracuda:

SET GLOBAL innodb_file_per_table=1; SET GLOBAL innodb_file_format=barracuda; CREATE TABLE t3 (a INT, b CHAR (20), PRIMARY KEY (a)) ROW_FORMAT=DYNAMIC; CREATE TABLE t4 (a INT, b CHAR (20), PRIMARY KEY (a)) ROW_FORMAT=COMPRESSED;

For more information about InnoDB row

formats, see Section 14.14, “InnoDB Row Formats”. For how to

determine the row format of an InnoDB table

and the physical characteristics of InnoDB

row formats, see Section 14.14, “InnoDB Row Formats”.

Always define a primary

key for an InnoDB table, specifying

the column or columns that:

Are referenced by the most important queries.

Are never left blank.

Never have duplicate values.

Rarely if ever change value once inserted.

For example, in a table containing information about people, you

would not create a primary key on (firstname,

lastname) because more than one person can have the

same name, some people have blank last names, and sometimes

people change their names. With so many constraints, often there

is not an obvious set of columns to use as a primary key, so you

create a new column with a numeric ID to serve as all or part of

the primary key. You can declare an

auto-increment column

so that ascending values are filled in automatically as rows are

inserted:

# The value of ID can act like a pointer between related items in different tables. CREATE TABLE t5 (id INT AUTO_INCREMENT, b CHAR (20), PRIMARY KEY (id)); # The primary key can consist of more than one column. Any autoinc column must come first. CREATE TABLE t6 (id INT AUTO_INCREMENT, a INT, b CHAR (20), PRIMARY KEY (id,a));

Although the table works correctly without defining a primary

key, the primary key is involved with many aspects of

performance and is a crucial design aspect for any large or

frequently used table. It is recommended that you always specify

a primary key in the CREATE TABLE

statement. If you create the table, load data, and then run

ALTER TABLE to add a primary key

later, that operation is much slower than defining the primary

key when creating the table.

To view the properties of an InnoDB table,

issue a SHOW TABLE STATUS

statement:

mysql> SHOW TABLE STATUS FROM test LIKE 't%' \G;

*************************** 1. row ***************************

Name: t1

Engine: InnoDB

Version: 10

Row_format: Compact

Rows: 0

Avg_row_length: 0

Data_length: 16384

Max_data_length: 0

Index_length: 0

Data_free: 41943040

Auto_increment: NULL

Create_time: 2015-03-16 16:42:17

Update_time: NULL

Check_time: NULL

Collation: latin1_swedish_ci

Checksum: NULL

Create_options:

Comment:

1 row in set (0.00 sec)

For information about SHOW TABLE

STATUS output, see

Section 13.7.5.37, “SHOW TABLE STATUS Syntax”.

This section describes techniques for moving or copying some or all

InnoDB tables to a different server or instance.

For example, you might move an entire MySQL instance to a larger,

faster server; you might clone an entire MySQL instance to a new

replication slave server; you might copy individual tables to

another instance to develop and test an application, or to a data

warehouse server to produce reports.

On Windows, InnoDB always stores database and

table names internally in lowercase. To move databases in a binary

format from Unix to Windows or from Windows to Unix, create all

databases and tables using lowercase names. A convenient way to

accomplish this is to add the following line to the

[mysqld] section of your

my.cnf or my.ini file

before creating any databases or tables:

[mysqld] lower_case_table_names=1

Techniques for moving or copying InnoDB tables

include:

Copying Data Files (Cold Backup Method)

You can move an InnoDB database simply by copying

all the relevant files listed under "Cold Backups" in

Section 14.21.1, “InnoDB Backup”.

InnoDB data and log files are binary-compatible

on all platforms having the same floating-point number format. If

the floating-point formats differ but you have not used

FLOAT or

DOUBLE data types in your tables,

then the procedure is the same: simply copy the relevant files.

Export and Import (mysqldump)

You can use mysqldump to dump your tables on one machine and then import the dump files on the other machine. Using this method, it does not matter whether the formats differ or if your tables contain floating-point data.

One way to increase the performance of this method is to switch off autocommit mode when importing data, assuming that the tablespace has enough space for the big rollback segment that the import transactions generate. Do the commit only after importing a whole table or a segment of a table.

If you have MyISAM tables that you want

to convert to InnoDB for better

reliability and scalability, review the following guidelines and

tips before converting.

As you transition away from MyISAM tables,

lower the value of the

key_buffer_size configuration

option to free memory no longer needed for caching results.

Increase the value of the

innodb_buffer_pool_size

configuration option, which performs a similar role of allocating

cache memory for InnoDB tables. The

InnoDB buffer

pool caches both table data and index data, speeding up

lookups for queries and keeping query results in memory for reuse.

For guidance regarding buffer pool size configuration, see

Section 8.12.4.1, “How MySQL Uses Memory”.

On a busy server, run benchmarks with the query cache turned off.

The InnoDB buffer pool provides similar

benefits, so the query cache might be tying up memory

unnecessarily. For information about the query cache, see

Section 8.10.3, “The MySQL Query Cache”.

Because MyISAM tables do not support

transactions, you might

not have paid much attention to the

autocommit configuration option

and the COMMIT and

ROLLBACK

statements. These keywords are important to allow multiple

sessions to read and write InnoDB tables

concurrently, providing substantial scalability benefits in

write-heavy workloads.

While a transaction is open, the system keeps a snapshot of the data as seen at the beginning of the transaction, which can cause substantial overhead if the system inserts, updates, and deletes millions of rows while a stray transaction keeps running. Thus, take care to avoid transactions that run for too long:

If you are using a mysql session for interactive experiments, always

COMMIT(to finalize the changes) orROLLBACK(to undo the changes) when finished. Close down interactive sessions rather than leave them open for long periods, to avoid keeping transactions open for long periods by accident.Make sure that any error handlers in your application also

ROLLBACKincomplete changes orCOMMITcompleted changes.ROLLBACKis a relatively expensive operation, becauseINSERT,UPDATE, andDELETEoperations are written toInnoDBtables prior to theCOMMIT, with the expectation that most changes are committed successfully and rollbacks are rare. When experimenting with large volumes of data, avoid making changes to large numbers of rows and then rolling back those changes.When loading large volumes of data with a sequence of

INSERTstatements, periodicallyCOMMITthe results to avoid having transactions that last for hours. In typical load operations for data warehousing, if something goes wrong, you truncate the table (usingTRUNCATE TABLE) and start over from the beginning rather than doing aROLLBACK.

The preceding tips save memory and disk space that can be wasted

during too-long transactions. When transactions are shorter than

they should be, the problem is excessive I/O. With each

COMMIT, MySQL makes sure each

change is safely recorded to disk, which involves some I/O.

For most operations on

InnoDBtables, you should use the settingautocommit=0. From an efficiency perspective, this avoids unnecessary I/O when you issue large numbers of consecutiveINSERT,UPDATE, orDELETEstatements. From a safety perspective, this allows you to issue aROLLBACKstatement to recover lost or garbled data if you make a mistake on the mysql command line, or in an exception handler in your application.The time when

autocommit=1is suitable forInnoDBtables is when running a sequence of queries for generating reports or analyzing statistics. In this situation, there is no I/O penalty related toCOMMITorROLLBACK, andInnoDBcan automatically optimize the read-only workload.If you make a series of related changes, finalize all the changes at once with a single

COMMITat the end. For example, if you insert related pieces of information into several tables, do a singleCOMMITafter making all the changes. Or if you run many consecutiveINSERTstatements, do a singleCOMMITafter all the data is loaded; if you are doing millions ofINSERTstatements, perhaps split up the huge transaction by issuing aCOMMITevery ten thousand or hundred thousand records, so the transaction does not grow too large.Remember that even a

SELECTstatement opens a transaction, so after running some report or debugging queries in an interactive mysql session, either issue aCOMMITor close the mysql session.

You might see warning messages referring to

“deadlocks” in the MySQL error log, or the output of

SHOW ENGINE INNODB

STATUS. Despite the scary-sounding name, a

deadlock is not a serious

issue for InnoDB tables, and often does not

require any corrective action. When two transactions start

modifying multiple tables, accessing the tables in a different

order, they can reach a state where each transaction is waiting

for the other and neither can proceed. MySQL immediately detects

this condition and cancels (rolls

back) the “smaller” transaction, allowing the

other to proceed.

Your applications do need error-handling logic to restart a transaction that is forcibly cancelled like this. When you re-issue the same SQL statements as before, the original timing issue no longer applies. Either the other transaction has already finished and yours can proceed, or the other transaction is still in progress and your transaction waits until it finishes.

If deadlock warnings occur constantly, you might review the application code to reorder the SQL operations in a consistent way, or to shorten the transactions.

For more information, see Section 14.10.5, “Deadlocks in InnoDB”.

To get the best performance from InnoDB tables,

you can adjust a number of parameters related to storage layout.

When you convert MyISAM tables that are large,

frequently accessed, and hold vital data, investigate and consider

the innodb_file_per_table and

innodb_file_format configuration

options, and the

ROW_FORMAT

and KEY_BLOCK_SIZE clauses of the

CREATE TABLE statement.

During your initial experiments, the most important setting is

innodb_file_per_table. When this

setting is enabled, new InnoDB tables are

implicitly created in

file-per-table

tablespaces. In contrast with the InnoDB system

tablespace, file-per-table tablespaces allow disk space to be

reclaimed by the operating system when a table is truncated or

dropped. File-per-table tablespaces also support the

Barracuda file format and

associated features such as table compression and efficient

off-page storage for long variable-length columns. For more

information, see Section 14.9.3.2, “File-Per-Table Tablespaces”.

To convert a non-InnoDB table to use

InnoDB use ALTER

TABLE:

ALTER TABLE table_name ENGINE=InnoDB;

Do not convert MySQL system tables in the

mysql database (such as

user or host) to the

InnoDB type. This is an unsupported

operation. The system tables must always be of the

MyISAM type.

You might make an InnoDB table that is a clone

of a MyISAM table, rather than using ALTER

TABLE to perform conversion, to test the old and new

table side-by-side before switching.

Create an empty InnoDB table with identical

column and index definitions. Use SHOW CREATE TABLE

to see the full

table_name\GCREATE TABLE statement to use.

Change the ENGINE clause to

ENGINE=INNODB.

To transfer a large volume of data into an empty

InnoDB table created as shown in the previous

section, insert the rows with INSERT INTO

.

innodb_table SELECT * FROM

myisam_table ORDER BY

primary_key_columns

You can also create the indexes for the InnoDB

table after inserting the data. Historically, creating new

secondary indexes was a slow operation for InnoDB, but now you can

create the indexes after the data is loaded with relatively little

overhead from the index creation step.

If you have UNIQUE constraints on secondary

keys, you can speed up a table import by turning off the

uniqueness checks temporarily during the import operation:

SET unique_checks=0;

... import operation ...

SET unique_checks=1;

For big tables, this saves disk I/O because

InnoDB can use its

change buffer to write

secondary index records as a batch. Be certain that the data

contains no duplicate keys.

unique_checks permits but does

not require storage engines to ignore duplicate keys.

For better control over the insertion process, you can insert big tables in pieces:

INSERT INTO newtable SELECT * FROM oldtable WHERE yourkey >somethingAND yourkey <=somethingelse;

After all records are inserted, you can rename the tables.

During the conversion of big tables, increase the size of the

InnoDB buffer pool to reduce disk I/O, to a

maximum of 80% of physical memory. You can also increase the size

of InnoDB log files.

If you intend to make several temporary copies of your data in

InnoDB tables during the conversion process, it

is recommended that you create the tables in file-per-table

tablespaces so that you can reclaim the disk space when you drop

the tables. When the

innodb_file_per_table

configuration option is enabled (the default), newly created

InnoDB tables are implicitly created in

file-per-table tablespaces.

Whether you convert the MyISAM table directly

or create a cloned InnoDB table, make sure that

you have sufficient disk space to hold both the old and new tables

during the process.

InnoDB tables require

more disk space than MyISAM tables.

If an ALTER TABLE operation runs

out of space, it starts a rollback, and that can take hours if it

is disk-bound. For inserts, InnoDB uses the

insert buffer to merge secondary index records to indexes in

batches. That saves a lot of disk I/O. For rollback, no such

mechanism is used, and the rollback can take 30 times longer than

the insertion.

In the case of a runaway rollback, if you do not have valuable data in your database, it may be advisable to kill the database process rather than wait for millions of disk I/O operations to complete. For the complete procedure, see Section 14.23.2, “Forcing InnoDB Recovery”.

The PRIMARY KEY clause is a critical factor

affecting the performance of MySQL queries and the space usage for

tables and indexes. The primary key uniquely identifies a row in a

table. Every row in the table must have a primary key value, and

no two rows can have the same primary key value.

These are guidelines for the primary key, followed by more detailed explanations.

Declare a

PRIMARY KEYfor each table. Typically, it is the most important column that you refer to inWHEREclauses when looking up a single row.Declare the

PRIMARY KEYclause in the originalCREATE TABLEstatement, rather than adding it later through anALTER TABLEstatement.Choose the column and its data type carefully. Prefer numeric columns over character or string ones.

Consider using an auto-increment column if there is not another stable, unique, non-null, numeric column to use.

An auto-increment column is also a good choice if there is any doubt whether the value of the primary key column could ever change. Changing the value of a primary key column is an expensive operation, possibly involving rearranging data within the table and within each secondary index.